Run these commands to copy the sample code into the newly created project and add all necessary dependencies. touch project/assembly.sbtĮcho 'addSbtPlugin("com.eed3si9n" % "sbt-assembly" % "0.14.10")' > project/assembly.sbt Run the following commands to add an SBT plugin, which allows packaging the project as a jar file. Navigate to the newly created project directory. When prompted, enter SparkPi for the project name. mkdir myprojectsĬreate a new Scala project from a template.

Kubernetes install apache spark on kubernetes free#

If you have an existing jar, feel free to substituteĬreate a directory where you would like to create the project for a Spark job. This jar is then uploaded to Azure storage. In this example, a sample jar is created to calculate the value of Pi. The jar can be made accessible through a public URL or pre-packaged within a container image. A jar file is used to hold the Spark job and is needed when running the spark-submit command. Push the container image to your container image registry./bin/docker-image-tool.sh -r $REGISTRY_NAME -t $REGISTRY_TAG push bin/docker-image-tool.sh -r $REGISTRY_NAME -t $REGISTRY_TAG build If using Azure Container Registry (ACR), this value is the ACR login server name. If using Docker Hub, this value is the registry name. Replace with the name of your container registry and v1 with the tag you prefer to use. The following commands create the Spark container image and push it to a container image registry. Run the following command to build the Spark source code with Kubernetes support./build/mvn -Pkubernetes -DskipTests clean package

export JAVA_HOME=`/usr/libexec/java_home -d 64 -v "1.8*"` If you have multiple JDK versions installed, set JAVA_HOME to use version 8 for the current session. git clone -b branch-2.4 Ĭhange into the directory of the cloned repository and save the path of the Spark source to a variable. The Spark source includes scripts that can be used to complete this process.Ĭlone the Spark project repository to your development system. Build the Spark sourceīefore running Spark jobs on an AKS cluster, you need to build the Spark source code and package it into a container image. See the ACR authentication documentation for these steps. If you are using Azure Container Registry (ACR) to store container images, configure authentication between AKS and ACR. az aks get-credentials -resource-group mySparkCluster -name mySparkCluster

az aks create -resource-group mySparkCluster -name mySparkCluster -node-vm-size Standard_D3_v2 -generate-ssh-keys -service-principal -client-secret Ĭonnect to the AKS cluster.

Kubernetes install apache spark on kubernetes password#

az ad sp create-for-rbac -name SparkSP -role Contributor -scopes /subscriptions/mySubscriptionIDĬreate the AKS cluster with nodes that are of size Standard_D3_v2, and values of appId and password passed as service-principal and client-secret parameters. After it is created, you will need the Service Principal appId and password for the next command. az group create -name mySparkCluster -location eastusĬreate a Service Principal for the cluster. If you need an AKS cluster that meets this minimum recommendation, run the following commands.Ĭreate a resource group for the cluster. We recommend a minimum size of Standard_D3_v2 for your Azure Kubernetes Service (AKS) nodes. Spark is used for large-scale data processing and requires that Kubernetes nodes are sized to meet the Spark resources requirements.

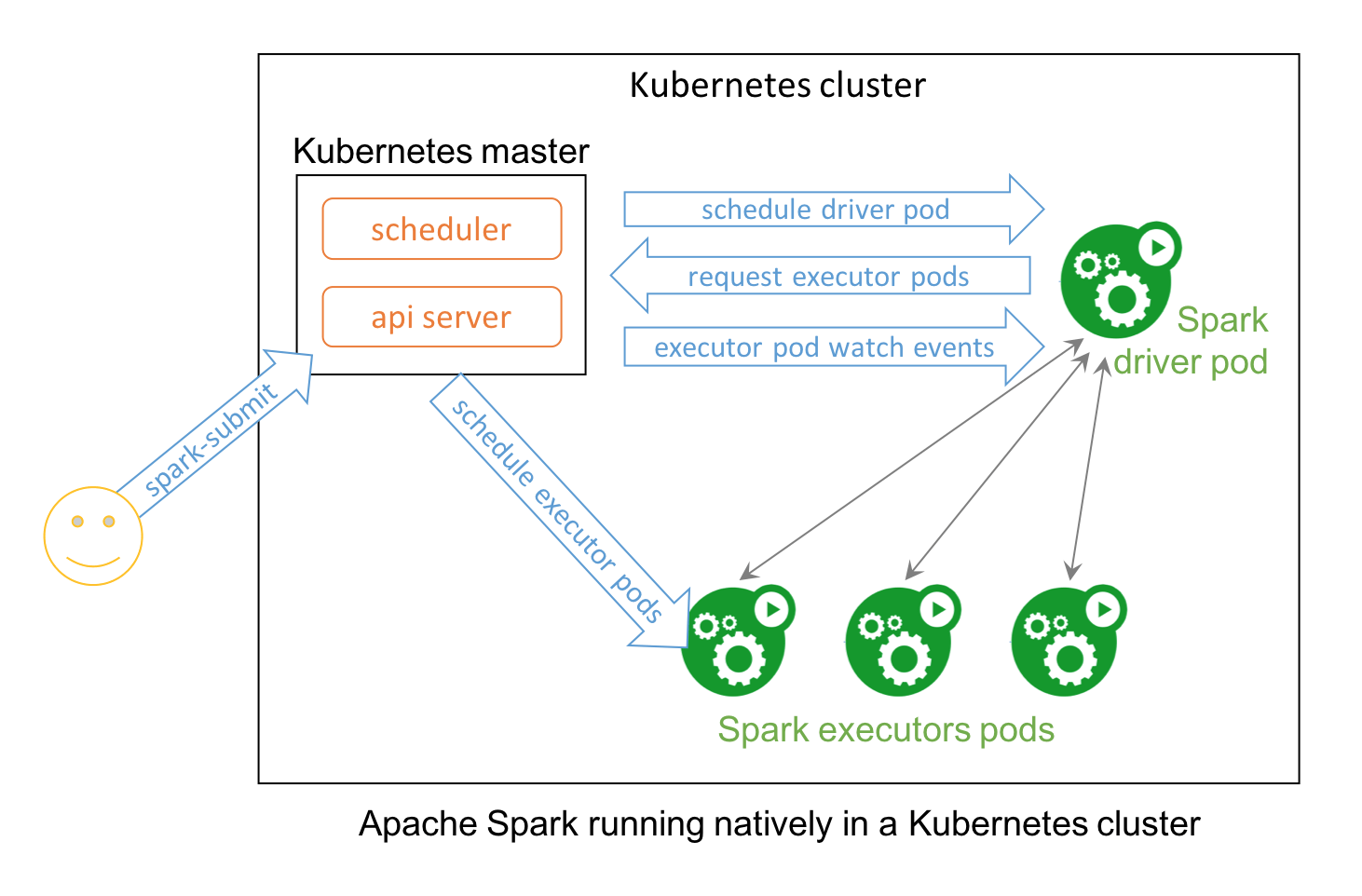

Git command-line tools installed on your system.SBT ( Scala Build Tool) installed on your system.Azure CLI installed on your development system.Docker Hub account, or an Azure Container Registry.Basic understanding of Kubernetes and Apache Spark.In order to complete the steps within this article, you need the following. This document details preparing and running Apache Spark jobs on an Azure Kubernetes Service (AKS) cluster. Azure Kubernetes Service (AKS) is a managed Kubernetes environment running in Azure. As of the Spark 2.3.0 release, Apache Spark supports native integration with Kubernetes clusters. Apache Spark is a fast engine for large-scale data processing.
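The commands for copying the sample code into the project and adding dependencies are not shown in this text, so the following is only a sketch of what that step might look like, not the article's own commands. It assumes the SparkPi example sits at its usual location in the Spark source tree (`examples/src/main/scala/org/apache/spark/examples/SparkPi.scala`) and that the project needs the `spark-sql` library as a `provided` dependency. The function name `add_spark_sample` and its two arguments are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper (not from the article): copy SparkPi.scala from a Spark
# source checkout into the sbt project in the current directory, then add a
# Spark dependency line to build.sbt.
#   $1  path to the Spark source checkout (assumption: saved earlier in a variable)
#   $2  Spark version to depend on (assumption: chosen by the reader)
add_spark_sample() {
  spark_src=$1
  spark_version=$2
  examples_dir="src/main/scala/org/apache/spark/examples"
  sample="$spark_src/examples/$examples_dir/SparkPi.scala"

  # Stop early if the checkout does not contain the sample.
  if [ ! -f "$sample" ]; then
    echo "sample not found: $sample" >&2
    return 1
  fi

  # Mirror the package directory layout inside the project and copy the file.
  mkdir -p "$examples_dir"
  cp "$sample" "$examples_dir/SparkPi.scala"

  # Append the dependency only if this exact line is not already present,
  # so running the helper twice does not duplicate it.
  dep="libraryDependencies += \"org.apache.spark\" %% \"spark-sql\" % \"$spark_version\" % \"provided\""
  touch build.sbt
  grep -qxF "$dep" build.sbt || printf '\n%s\n' "$dep" >> build.sbt
}
```

A call might look like `add_spark_sample "$sparkdir" 2.4.0`, where both the variable name `sparkdir` and the version are placeholders. The project's `scalaVersion` in build.sbt would also need to match the Scala version the chosen Spark release was built for.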